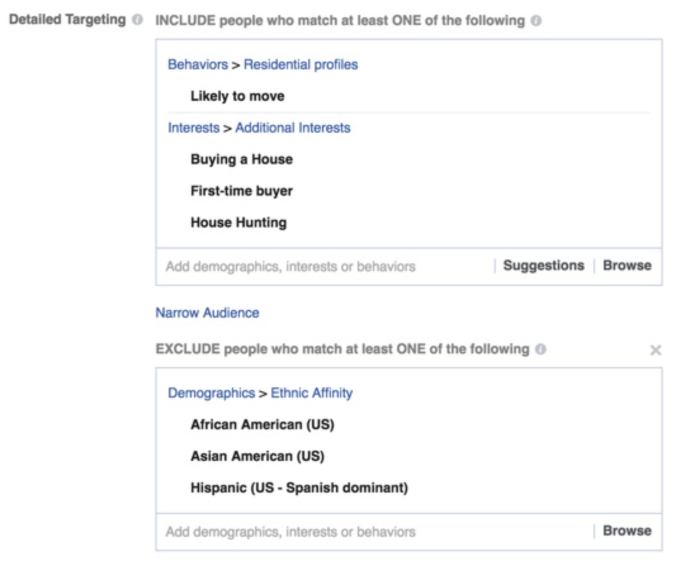

Reporting today from ProPublica shows that Facebook allows buyers of demographically targeted ads to exclude users based on race. Oops, sorry, that’s “ethnic affinity”, which is totally a different thing:

> He said Facebook began offering the “Ethnic Affinity” categories within the past two years as part of a “multicultural advertising” effort.
>
> Satterfield added that the “Ethnic Affinity” is not the same as race — which Facebook does not ask its members about. Facebook assigns members an “Ethnic Affinity” based on pages and posts they have liked or engaged with on Facebook.

Uh huh. You can keep your Orwellian euphemisms when your targeting options look like this:

A common complaint about targeted ads online is that they’re “creepy”. Your browsing and buying behavior is tracked with cookies, and you’re hit with an uncanny ad later. Sometimes the algorithms are adorably dopey, like when you buy a thing and then get ads for the same thing for the rest of the month. Or when you accidentally glance at an irrelevant product once and it haunts your ads for eternity.

But this is a useful reminder that creepy and funny are privileged problems to have. If instead you are in a situation where your demographics make you a target for discrimination and prejudice, tracking and targeting are more serious problems. Not that the erosion of privacy isn’t serious, but the problems interact with stereotypes in even more distressing ways.

The immediate issue with this conduct by Facebook is that it appears, as ProPublica reports, to be at least possibly in contravention of the Fair Housing Act: think “whites only” housing ads. So this serves to show that existing legal frameworks governing appropriate conduct in advertising must be interpreted anew in the realm of surgical targeting and monetization of user data in online social networks. This is before we even get to the ethical garbage fire beneath.

I have noticed around the internet today a vocal minority responding to this story with a hearty “so what?”, noting that demographic targeting is nothing new in the ad business. Well, yes. I realize that if I advertise on a particular TV show I am not getting a random sample of the population. But I am certainly not buying the idea that there is no moral, psychological, or (wait, we just covered this) legal distinction between one and the other. Indeed, ProPublica has again preempted us: they recall how thoroughly the New York Times vets housing ads for racial discrimination, after the paper was successfully sued in 1989 for exactly this type of thing.

This is a great example of one of Cathy O’Neil’s Weapons of Math Destruction, algorithms with unintended consequences. In a world in which we are increasingly harangued into accepting “big data” and statistics as the modern fact, we are also cornered into accepting algorithms as the modern decision. Passing the buck of right and wrong onto numbers and their opaque crunching is unacceptable. At a minimum I would propose that it’s far from sufficient to trust the writers of code to also serve as stewards of public ethics.

My own research has included work on privacy regulation in online markets and targeting in social networks, and so this story is quite squarely up my alley. For example, in work with Avi Goldfarb and Catherine Tucker, I have argued that policy efforts to address users’ privacy concerns may be subject to the unintended consequence of empowering incumbents and decreasing competition in data-driven industries. There we took “privacy concerns” in the abstract; well, here are your privacy concerns.

One typical conception of targeting in the economics framework is to say it helps the seller to tailor the product to the buyer. On the one hand, the seller may then be able to extract more cash from you by personalizing the price and the product to what they believe you can afford. On the other hand, the “fit” between product and person (and its predictability) may improve, leading customers to enjoy a better outcome. Or both hands—it could well be a win-win in this sense.

But these rosy tales of mutually beneficial trade aren’t so rosy if there is a rotten whiff of prejudice in the air, and prejudice does not fit easily into our usual economics framework. Often economists overlook the real prevalence and danger of discrimination precisely because our methodology treats individual decision-makers as bundles of preferences and (valiantly) takes for granted that everyone should be treated equally. This is a sense in which the unrealism of our models is not innocuous: it can leave us flat-footed when legal and ethical problems sit in our blind spot.

There are certainly literatures and traditions in economics studying discrimination (see stratification economics, for example). But our typical methodology is perhaps not ideal for addressing these issues. A gain is a gain and a loss is a loss. But some hurt is not like other hurt. We are close then to the old chestnut of whether my distaste for something you do is an externality you impose upon me, deserving of public consideration, or a case in which I should simply mind my own business. On this question politics is fought, and economics cannot really deal with that gray area.

Where was I? Oh yes. In sum, it would probably be good if Facebook would stop doing this.