Thinking today about an old, abandoned line of research of mine. How about this: when information is cheap, knowledge is expensive.

My paper on the economic rationale for open access policies came from a quite different original idea. I have several early versions of that model floating around my Dropbox under the name “info processing”. (By the way, don’t you think titles of early drafts of stuff tell you something way more useful about the idea than the actual final title?)

By “information processing” I had in mind a kind of network-y model of a bunch of information sources that get compressed and aggregated down. For example: content aggregators online squeeze stuff into a manageable single-serve chunk, and now content aggregators have spawned aggregators of aggregators(!) like popurls.

Another example: a person takes in a bunch of information from different sources, and it bounces around and combines and percolates into some (imperfect) knowledge or understanding of an underlying “thing”, and then they can pass on that idiosyncratic version of the knowledge to the next “layer” down.

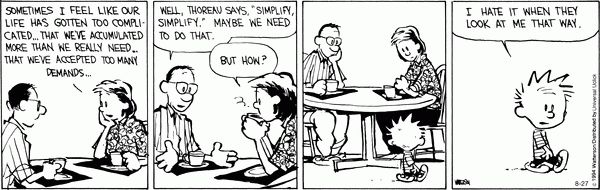

This is the version of the story that ultimately became the finished paper. I realized there was something there that could be simplified down: a single source of information, passed down imperfectly through generations like the telephone game, would work just as well to tell a story about stewardship of information as the more complicated many-source, many-layer version. It helped, naturally, that the more complicated version was harder to work with. Simplify, simplify.

An idea that had to get culled along the way is one that is not super original but I think would have worked pretty well in the context of the many-sources, many-layers story. The idea was to focus on a production function for “knowledge”, or whatever you want to call it, that would define how a bunch of disparate, partly related chunks of information would get combined into something new by the “reader”.

In the aggregator context this becomes a technical question. What algorithm or editorial rules will I apply to take original sources and compress and sort them into my aggregated output?

In the reader context, this is more of a spiritual exercise. When I read and synthesize many sources, do I produce something that is proportionally “bigger” than a single source, greater than the sum of its parts? How much of each original source is realistically recoverable from my synthesized output? When does overload kick in and start to degrade my ability to make anything useful from the inputs?

I was prompted to think about this again today on reading Miles Kimball’s paper “Cognitive Economics”, which you can get via here and is well worth a read if you’re into that kind of thing. In particular, its fascinating and informative section 5 is on approaches to “finite cognition”, a kind of re-branding of “bounded rationality” which I certainly welcome as consistent with my personal rule zero of avoiding judgey-sounding words like “rationality” where possible.

Back to basics. A production function asks: how much output can you get from a given combination of inputs, in their various amounts and types? One person plus one spade and one hour makes one hole. Two people and one spade and one hour makes… one hole. The modulator is technology, governing the relationship between inputs and output.
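To make the spade example concrete, here is a minimal sketch in Python. It assumes a fixed-proportions (Leontief-style) production function, which is one way to read the example, not something spelled out above. The function name `holes_dug` and its parameters are made up for illustration.

```python
def holes_dug(people: int, spades: int, hours: int) -> int:
    """Holes produced under an assumed fixed-proportions technology.

    Assumption: one person needs exactly one spade, and a working
    person-spade pair digs one hole per hour. Extra people without
    spades (or extra spades without people) add nothing.
    """
    working_pairs = min(people, spades)
    return working_pairs * hours


# One person, one spade, one hour.
print(holes_dug(people=1, spades=1, hours=1))
# Two people, still one spade, one hour: the second person is idle.
print(holes_dug(people=2, spades=1, hours=1))
# A second spade is what actually raises output.
print(holes_dug(people=2, spades=2, hours=1))
```

The `min` is the "technology" here: change it to something else (a sum, a product) and the same inputs give a different number of holes.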

So what does the production function look like when the inputs are information? What is the output? Knowledge? Understanding? Just more, different information?

What technology governs the combination? We have brains, attention spans, creativity, but we also have writing tools, information storage and retrieval, the internet.

When I was working on that original idea, I was picturing a sprawling octopus of a network (I was very much in the network mindset at that time) of recursive linking and combining and synthesizing. Like this:

The first layer is whatever the original stuff is. The second layer has synthesized three of the five originals. The third layer is synthesizing three things: the “output” of the second layer, one of the originals not synthesized by the second layer, and one of the originals already synthesized into the second layer. Phew!

So what does the third layer produce? You can see why this model got out of hand. The real weirdness here would be how sensitive this kind of recursive (and therefore potentially hard to compute) information-into-knowledge process would be to one’s assumptions about the production process that transforms one into the other.
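One way to see the sensitivity is to pick a production rule and trace the three layers through it. The sketch below is purely illustrative: it assumes a rule where the reader splits attention equally across whatever they read and keeps some fixed fraction (`retention`) of the total. The source names `A` to `E`, the `synthesize` function, and the equal-attention rule are all my assumptions, not the model from the paper.

```python
from fractions import Fraction


def original(name):
    """An original source: it consists entirely of itself."""
    return {name: Fraction(1)}


def synthesize(inputs, retention=Fraction(1)):
    """Combine sources under an assumed equal-attention rule.

    Each input gets an equal share of the reader's attention, scaled
    by `retention` (the fraction of material that survives at all).
    The result maps each original to its share of the output.
    """
    share = retention / len(inputs)
    output = {}
    for source in inputs:
        for name, weight in source.items():
            output[name] = output.get(name, Fraction(0)) + share * weight
    return output


# Layer 2 synthesizes three of the five originals (A, B, C).
layer2 = synthesize([original("A"), original("B"), original("C")])

# Layer 3 synthesizes layer 2's output, one original that layer 2
# skipped (D), and one original that layer 2 already used (C).
layer3 = synthesize([layer2, original("D"), original("C")])

for name in ["A", "B", "C", "D", "E"]:
    print(name, layer3.get(name, Fraction(0)))
```

Under this rule, C ends up heavily over-represented in layer 3 because it arrives twice, A and B are diluted to a sliver, and E never shows up at all. Swap in a different rule for `synthesize` and those shares move around, which is exactly the problem.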

The concept of “too much information” could manifest in at least two distinct places here. Too many original sources means lots of forgetting, or a production function that is overloaded with inputs to the potential detriment of the output. Or, perhaps more accurately, to the potential detriment of accurately capturing the range of the originals in any realistic output of manageable size. Or, perhaps more pertinently, it means an output that inescapably masks the alchemy of how the originals were synthesized.

The cheapness of data and information pushes on the cost-benefit see-saw and almost forces people and organizations to try and eat it all up. Synthesis and knowledge may get harder in some kind of proportion to the cheapness of the inputs, at least in the absence of some institutional discipline against information overload.

I think there’s a straight line here to the ideas in Cathy O’Neil’s new book “Weapons of Math Destruction” on the burying of biases and value judgements under the suffocating soil of big data and numbers and algorithms. But hey, here I am, synthesizing away and who can say what I’m forgetting to tell you. See what I mean?

Whether I’ll ever pick this line of reasoning back up and make anything with it I don’t know. It might just be too unwieldy an idea to make for a good economics paper. But I still think about it quite often. If we are all now The Man Who Knew Too Much, then I think the right way to view this is as a technology problem, not in the sense of “the internet has eaten our attention spans”, but in the sense of a production function. Being a consumer, transmitter, synthesizer, and reproducer of information has always been a messy business. How the sausage of knowledge gets made is what will determine how changes in our habits and environment ultimately change our world.